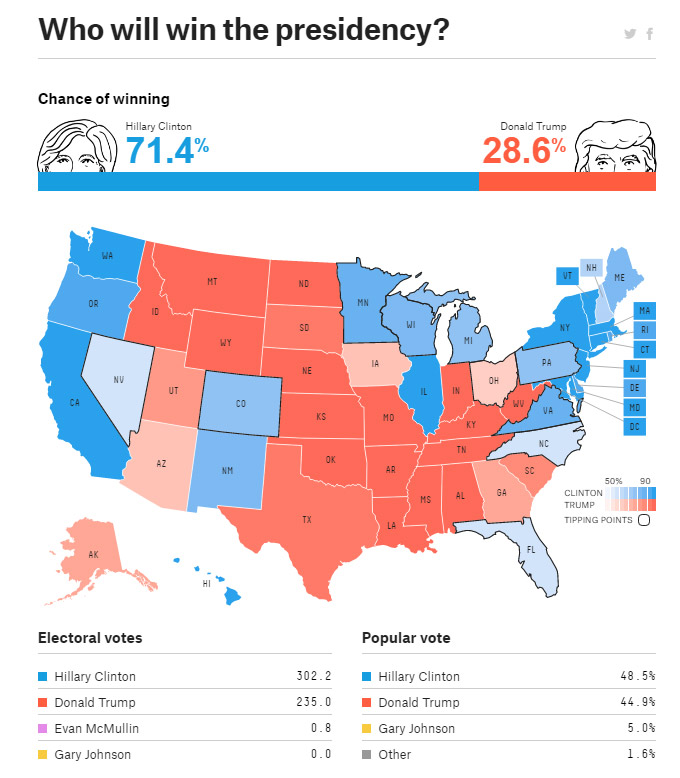

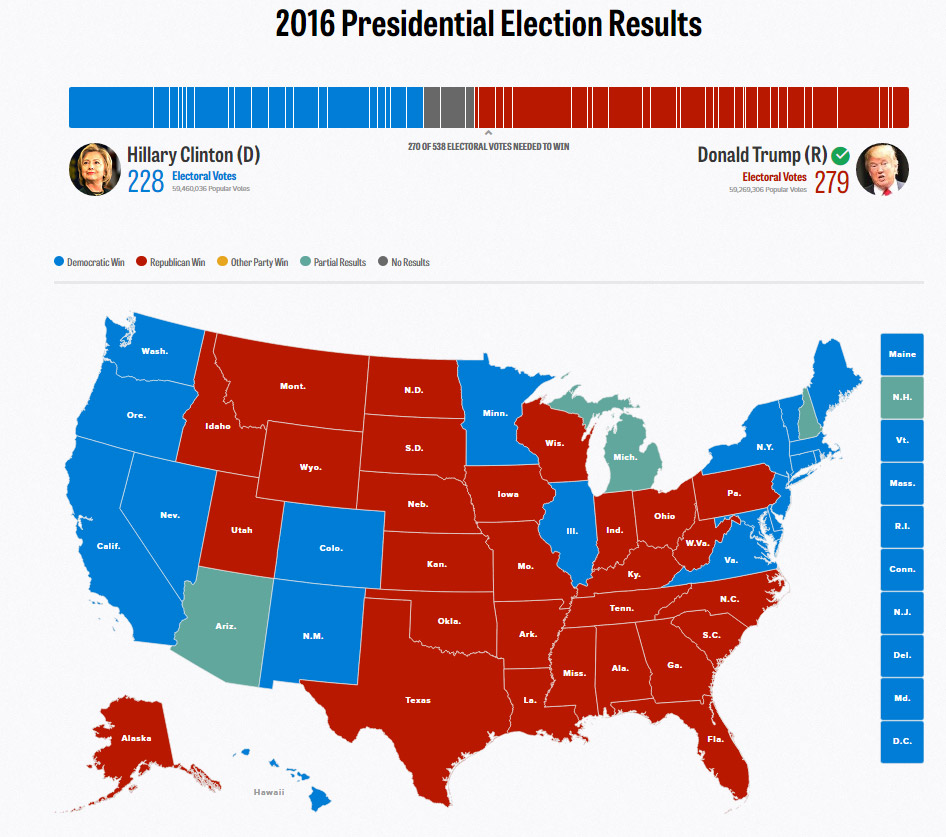

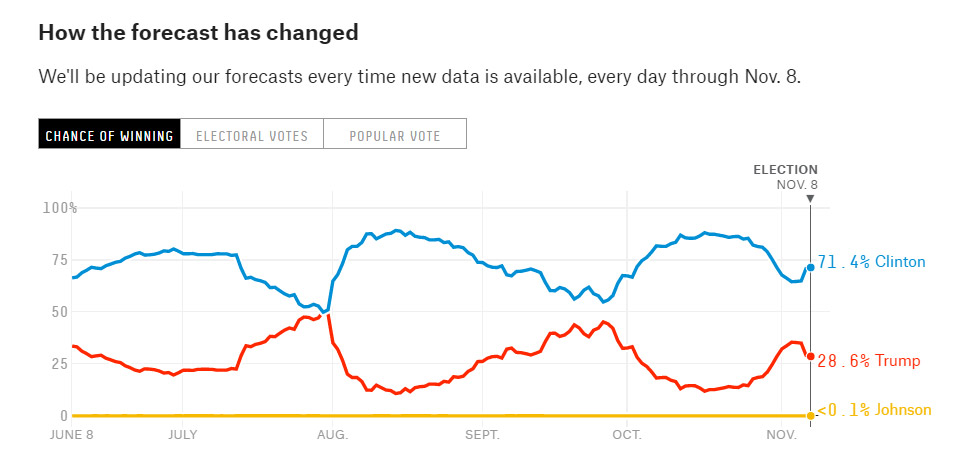

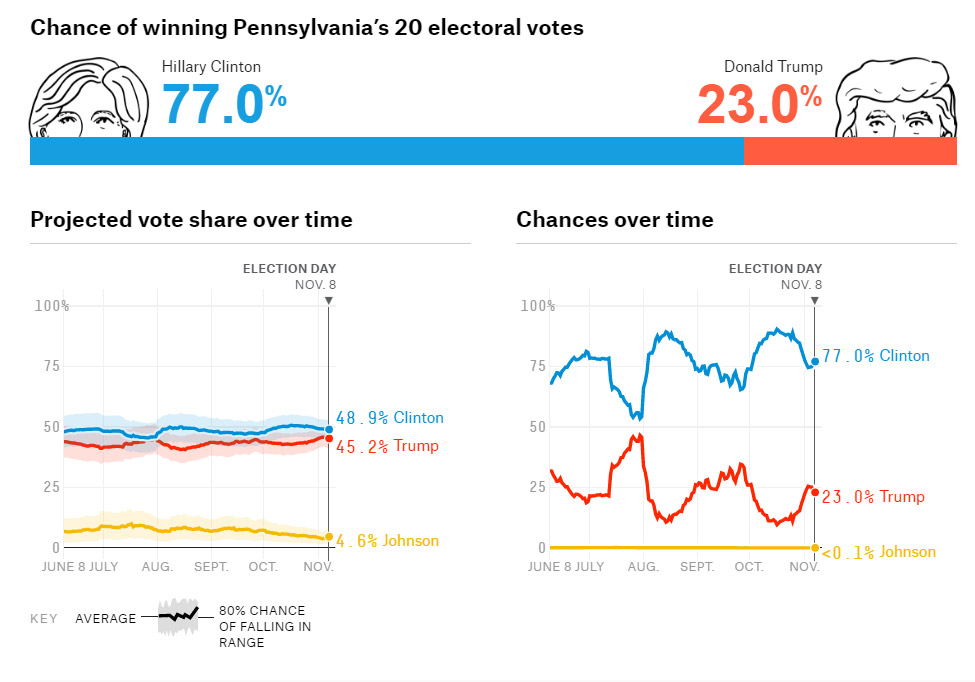

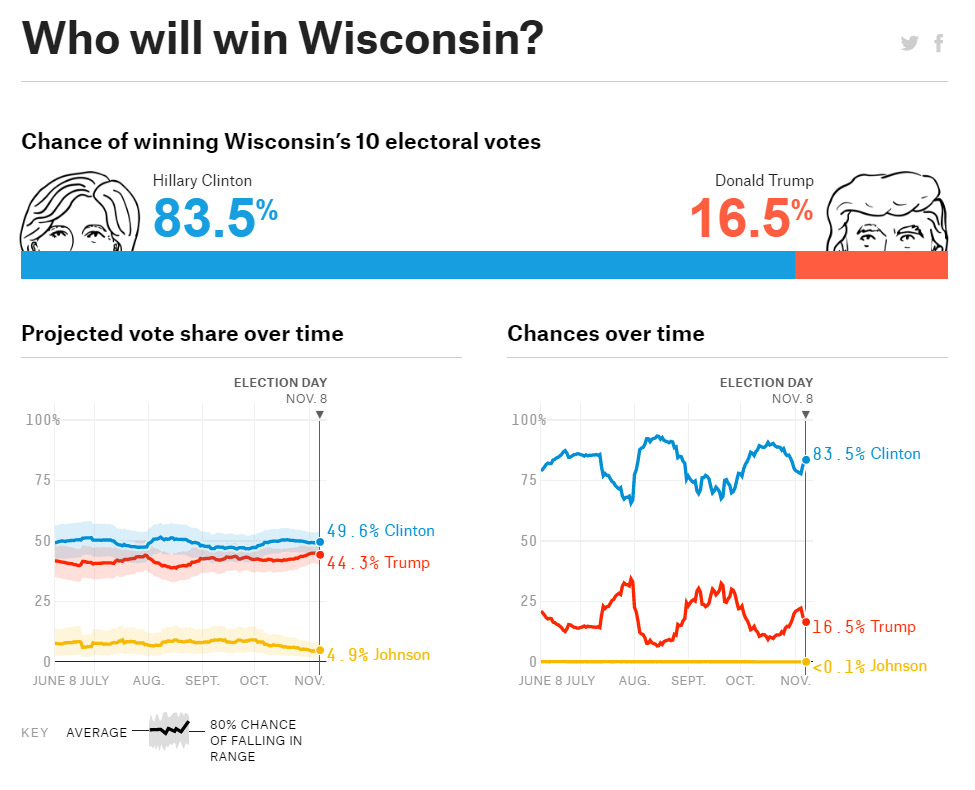

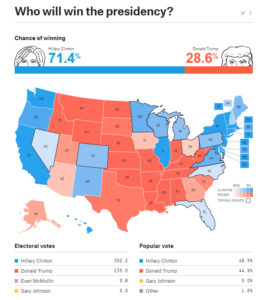

The US election result came as a big surprise to me, as it did to most who had put their faith in polling and statistical analysis to predict the outcome. Nate Silver and his company FiveThirtyEight are widely acknowledged to have some of the best analysis in the business; and it is a HUGE business. As the election opened, his final prediction was a 71.4% chance of a Clinton victory, with Clinton winning 302 electoral votes. With 34 electoral votes still to be decided (and Clinton set to get only 4 of them), she has 228, a far cry from the prediction. Something went terribly wrong in the polling and analysis.

One useful feature of politics and sports is that both rely heavily on data collection, such as polling, and on subsequent analysis… AND, crucially, we get the true result, which can be compared with the predictions. This is unlike most scientific studies in academia, where we assume the results of our analysis are correct. What can we learn about the shortcomings of such analysis from a setting where money, interest and access to data are next to unlimited?

Some thoughts:

- A probability of 71% for Clinton means that roughly 3 out of 10 such elections would end in a Trump victory. Even an 80% probability means that Trump would win 1 time out of 5. Not likely, but both are more likely than rolling a 6 with a die.
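To make these odds concrete, here is a minimal Monte Carlo sketch. It assumes each simulated election is an independent draw in which the favorite wins with a fixed probability (the 71.4% and 80% figures above); the function name `underdog_win_rate` and the trial count are illustrative choices, not part of any forecaster's model. It simply counts how often the underdog wins and compares that with the chance of rolling a 6 on a fair die.

```python
import random


def underdog_win_rate(favorite_prob: float, trials: int = 100_000, seed: int = 1) -> float:
    """Fraction of simulated elections won by the underdog when the favorite
    wins each one independently with probability favorite_prob."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    # rng.random() is uniform on [0, 1); values >= favorite_prob are upsets.
    upsets = sum(rng.random() >= favorite_prob for _ in range(trials))
    return upsets / trials


if __name__ == "__main__":
    for p in (0.714, 0.80):
        print(f"Favorite at {p:.1%}: underdog wins {underdog_win_rate(p):.1%} of the time")
    print(f"Rolling a 6 with a fair die: {1 / 6:.1%}")
```

The simulated upset rates land close to 28.6% and 20%, both above the roughly 16.7% chance of rolling a 6.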

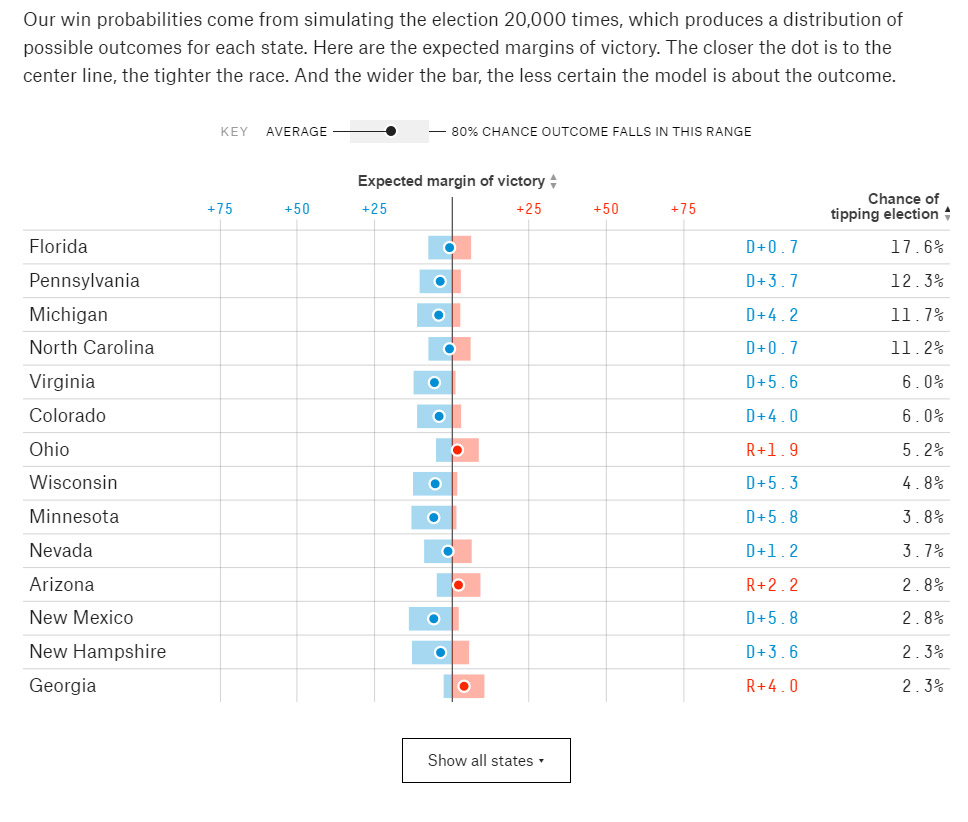

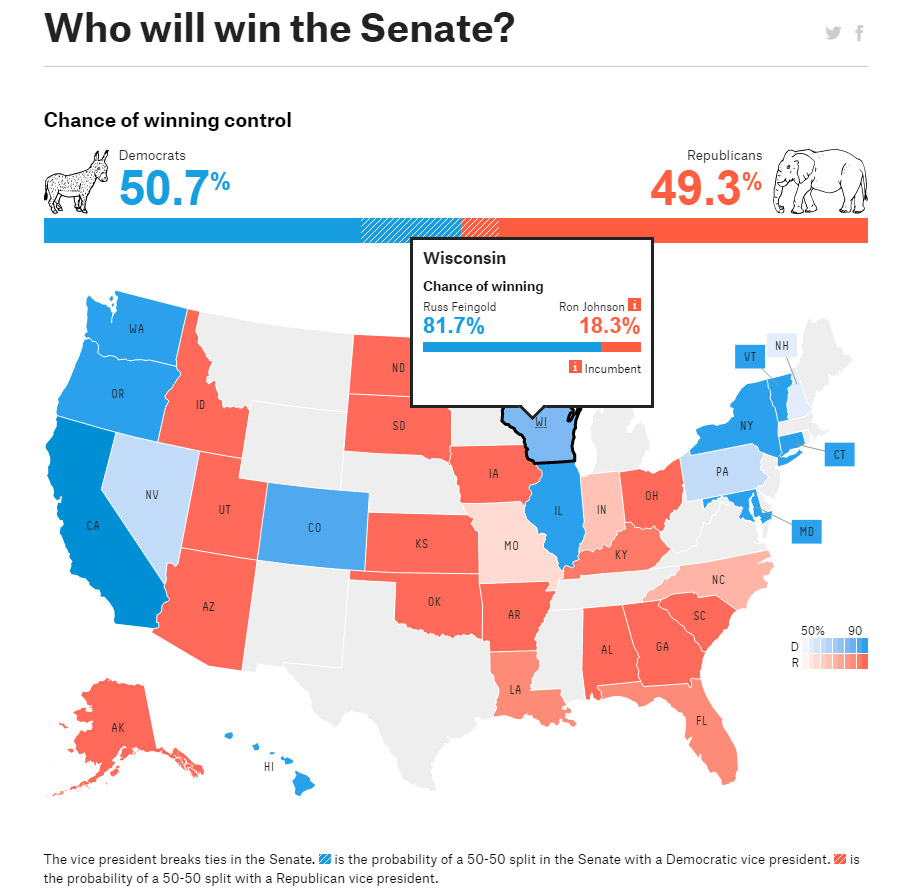

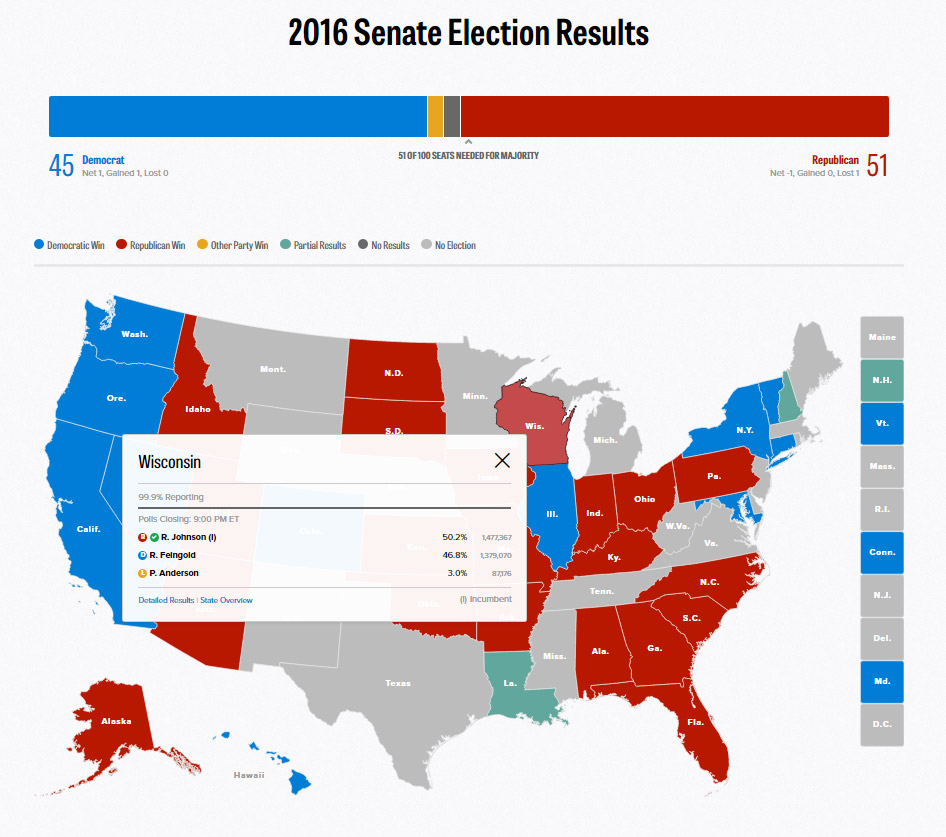

- Errors were largely treated as uncorrelated. That is, a polling error in one state, such as an underrepresentation of angry white men in Pennsylvania, was not taken to mean that the same error was likely in similar states nearby, such as Wisconsin and Michigan.

- Difference between what respondents say and what they do. When answering a survey, respondents are influenced by what they think they should answer, rather than by what they believe to be true. Supporting Trump was socially unacceptable for many, as virtually all news outlets supported Clinton and condemned Trump. This created what some call “leaners”: people who would only admit to supporting Trump when leaning in and whispering it.

- The sample was not representative of the voting population. While there were advanced models correcting for likely voters and for the population's proportions by age, gender, income and race, these models turned out to be flawed.

- There were important confounders. There has been some talk of the “ground game”, that is, how good the local parties have been at getting their supporters to the voting booth, and, relatedly, of state and local laws making it more or less difficult for certain segments of the population to vote (e.g. hours-long lines to vote in urban areas, and restrictive voter ID laws targeting minority populations). These can have large local effects, tipping the scale one way or the other, but they are not accounted for in the models, possibly because the effects are hard to measure. While difficult to include in a model, they are no less important.

- Statistical analyses are mathematical tools based on probability. The equations give an answer regardless of the quality or relevance of the numbers put in. It is up to the researcher to determine whether the method is suitable, to choose the correct variables and to collect the data. It is tempting to omit variables that are too hard to collect, or to substitute easy-to-collect proxies for more appropriate ones. In this election, contextual variables were ignored, as were psychological ones, such as how people react to various behaviors and to fear.

- (See Dan Ariely for a related example: when you go to a bank and ask “How much should I borrow to buy a house?”, they answer the question “How much CAN I borrow?”, a much easier question that does not deal with personal preferences.)

- It is tempting for researchers to use such statistical tools, as they are readily available and give the impression of accurate results. Qualitative research can require more work and expertise to do well, and can seem less reliable than percentages. The question remains: do researchers understand the limitations of the quantitative tools they employ?
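The point about correlated errors can be illustrated with a small simulation. This is a minimal sketch, not FiveThirtyEight's or anyone else's actual model: it assumes hypothetical numbers (a 3-point polled lead and a 3-point error standard deviation in each of three similar states) and splits each state's polling error into a shared component and a state-specific one. The hypothetical parameter `rho` is the share of error variance common to all states; each state on its own is equally uncertain in both runs, but with shared errors, a miss in one state makes a miss in the others much more likely.

```python
import math
import random


def sweep_loss_probability(margin: float, sigma: float, rho: float,
                           n_states: int = 3, trials: int = 100_000,
                           seed: int = 7) -> float:
    """Estimate P(favorite loses every state) when each state's polling error
    is a shared component plus an independent state-specific component.

    rho is the share of error variance common to all states; each state's
    total error standard deviation stays equal to sigma for any rho.
    """
    rng = random.Random(seed)
    shared_sd = sigma * math.sqrt(rho)
    local_sd = sigma * math.sqrt(1 - rho)
    sweeps = 0
    for _ in range(trials):
        shared = rng.gauss(0, shared_sd)  # same error hits every state
        # The favorite loses a state if the total error wipes out its polled lead.
        if all(margin + shared + rng.gauss(0, local_sd) < 0 for _ in range(n_states)):
            sweeps += 1
    return sweeps / trials


if __name__ == "__main__":
    # Hypothetical inputs: 3-point lead, 3-point error SD, three states.
    for rho in (0.0, 0.8):
        p = sweep_loss_probability(margin=3.0, sigma=3.0, rho=rho)
        print(f"rho={rho}: favorite loses all three states with probability {p:.3f}")
```

With independent errors, losing all three states is roughly the single-state probability cubed (about 0.16³ ≈ 0.004); with most of the error shared, the same sweep becomes many times more likely, even though no individual state poll got any worse.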

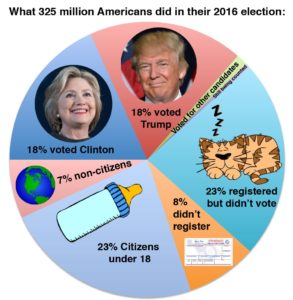

- Response rate can limit which inferences can be drawn. In this election, turnout was about 57%. Trump got 47.5% of those votes; put differently, about 27% of the electorate actively supported Trump. Are these voters representative of the country as a whole?
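The 27% figure comes from multiplying the two shares. A tiny sketch of that arithmetic, using the turnout (57%) and vote share (47.5%) quoted above; the function name `electorate_breakdown` is purely illustrative:

```python
def electorate_breakdown(turnout: float, vote_share: float) -> dict:
    """Split the eligible electorate into three groups, as fractions of the whole."""
    return {
        "voted_for_candidate": turnout * vote_share,       # 0.57 * 0.475
        "voted_for_others": turnout * (1 - vote_share),    # everyone else who voted
        "did_not_vote": 1 - turnout,
    }


if __name__ == "__main__":
    for group, share in electorate_breakdown(turnout=0.57, vote_share=0.475).items():
        print(f"{group}: {share:.1%}")
```

On these inputs, the 43% who did not vote at all form a larger group than either the candidate's voters (about 27%) or everyone else who voted combined (about 30%).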

The question researchers need to ask themselves, their colleagues and their peers is: when do we fall foul of these errors in our own research? Are we careful enough with our methodology? Is a p-value of 0.05 good enough? Do we fully understand what it means?
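What a 0.05 threshold means can be checked by simulation. This is a minimal sketch under stated assumptions: a simple two-group comparison, normally distributed data and a z-test with known standard deviation (group size, number of experiments and function names are all hypothetical). Even when there is no real effect at all, about 5% of experiments still come out “significant” at p < 0.05.

```python
import math
import random


def two_sided_p_value(z: float) -> float:
    """Two-sided p-value for a standard normal test statistic: P(|Z| >= |z|)."""
    return math.erfc(abs(z) / math.sqrt(2))


def false_positive_rate(alpha: float = 0.05, experiments: int = 10_000,
                        n: int = 30, seed: int = 3) -> float:
    """Share of experiments with NO real effect that still reach p < alpha.

    Each experiment compares two groups drawn from the same N(0, 1)
    population, using a z-test with the known standard deviation of 1.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(experiments):
        a = [rng.gauss(0, 1) for _ in range(n)]
        b = [rng.gauss(0, 1) for _ in range(n)]
        # Difference of two means has variance 1/n + 1/n = 2/n.
        z = (sum(a) / n - sum(b) / n) / math.sqrt(2 / n)
        if two_sided_p_value(z) < alpha:
            hits += 1
    return hits / experiments


if __name__ == "__main__":
    print(f"'Significant' results with no real effect: {false_positive_rate():.1%}")
```

A p-value measures how surprising the data would be if there were no effect; it is not the probability that a finding is true, and it says nothing about whether the sample represents the population in the first place.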

An analysis of tweets during the election, done at MIT, shows how polarized the electorate was and, further, how virtually no journalists were part of the Trump Twitter conversation. (The source article is linked at the bottom and has some beautiful network-analysis illustrations!)

Key maps of predictions and results are included as images in this post.

For sources, see:

- 2016 Election Forecast, FiveThirtyEight: Nate Silver's predictions and polling data for the 2016 presidential election between Hillary Clinton and Donald Trump.
- Live Election Night Forecast: election-night forecasts updated as states were called for presidential and Senate candidates.
- 2016 Election Results: President Live Map by State, Real-Time Voting Updates, POLITICO.
- How Data Failed Us in Calling an Election, The New York Times (published a day after this post).
- Journalists and Trump voters live in separate online bubbles, MIT analysis shows (the source for the MIT Twitter analysis mentioned above).
- 19 Things We Learned from the 2016 Election, Statistical Modeling, Causal Inference, and Social Science.