An easy-to-read article in Nature that covers the severe limitations of the P-value and explains why P-fishing is a dangerous road.

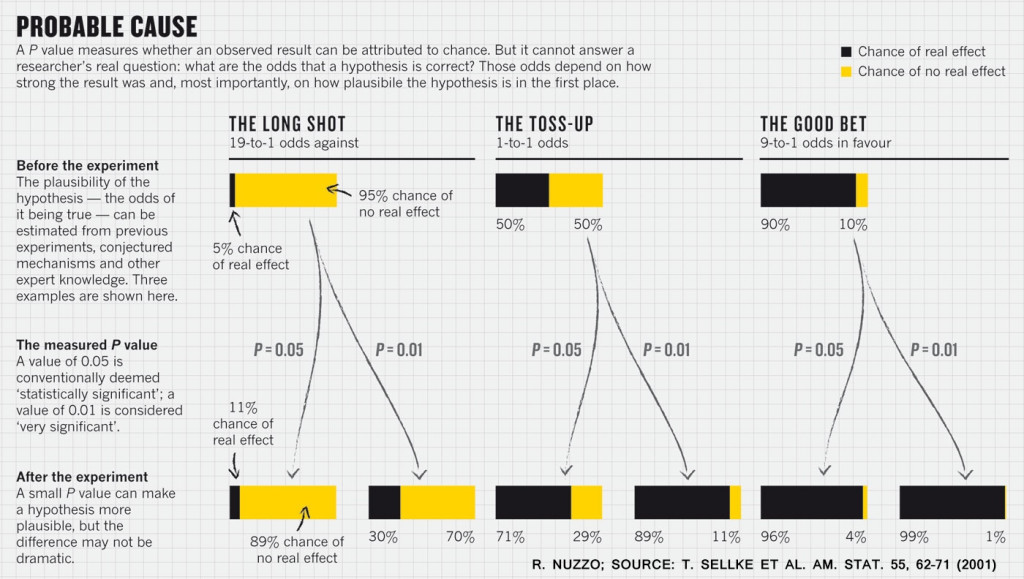

The key takeaway is that while the P-value says something about how probable the observed data are under a specific null hypothesis, it does not directly state the probability that the finding itself is true. To say whether a finding is likely to be true, one also needs to know the probability of there being a real effect in the first place.
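A rough way to see this is to apply Bayes' rule to a "significant" result. The sketch below is not taken from the article: the function name `prob_effect_is_real` and the default power of 0.8 and threshold of 0.05 are assumptions chosen for illustration, and it treats significance as a simple yes/no outcome rather than using the exact P-value.

```python
def prob_effect_is_real(prior, power=0.8, alpha=0.05):
    """Probability that a significant result reflects a real effect.

    prior: assumed probability that a real effect exists before the study
    power: assumed chance of detecting a real effect (hypothetical default)
    alpha: significance threshold, i.e. the false positive rate under the null
    """
    true_positives = prior * power          # real effect and significant
    false_positives = (1 - prior) * alpha   # no effect but significant anyway
    return true_positives / (true_positives + false_positives)


# Same significance threshold, very different conclusions depending on the prior.
for prior in (0.05, 0.5, 0.9):
    posterior = prob_effect_is_real(prior)
    print(f"prior={prior:.2f} -> P(real effect | significant)={posterior:.2f}")
```

Under these assumptions a long-shot hypothesis (prior of 5%) that reaches significance is still more likely to be false than true, while the same result for a plausible hypothesis is fairly convincing.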

Some good extracts from the article:

Critics also bemoan the way that P values can encourage muddled thinking. A prime example is their tendency to deflect attention from the actual size of an effect. Last year, for example, a study of more than 19,000 people showed that those who meet their spouses online are less likely to divorce (p < 0.002) and more likely to have high marital satisfaction (p < 0.001) than those who meet offline (see Nature http://doi.org/rcg; 2013). That might have sounded impressive, but the effects were actually tiny: meeting online nudged the divorce rate from 7.67% down to 5.96%, and barely budged happiness from 5.48 to 5.64 on a 7-point scale. To pounce on tiny P values and ignore the larger question is to fall prey to the “seductive certainty of significance”, says Geoff Cumming, an emeritus psychologist at La Trobe University in Melbourne, Australia. But significance is no indicator of practical relevance, he says: “We should be asking, ‘How much of an effect is there?’, not ‘Is there an effect?’”

In an analysis, he found evidence that many published psychology papers report P values that cluster suspiciously around 0.05, just as would be expected if researchers fished for significant P values until they found one.
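(Not from the article:) one common form of fishing is to keep collecting data and re-testing until the P-value dips below 0.05. The simulation below is a minimal sketch of that, assuming normally distributed data with known unit variance and no real effect at all. The function names, batch sizes and number of simulated studies are hypothetical choices for illustration.

```python
import random
from statistics import NormalDist


def z_test_p(sample):
    """Two-sided P-value for H0: mean = 0, assuming known unit variance."""
    z = sum(sample) / len(sample) ** 0.5
    return 2 * (1 - NormalDist().cdf(abs(z)))


def fished_study(rng, start=10, step=5, max_n=100, alpha=0.05):
    """Simulate one study with no real effect, testing after every batch.

    Returns True if any look at the data gives P < alpha.
    """
    sample = [rng.gauss(0, 1) for _ in range(start)]
    while True:
        if z_test_p(sample) < alpha:
            return True   # stop and "publish" at the first significant look
        if len(sample) >= max_n:
            return False  # ran out of budget without a significant result
        sample.extend(rng.gauss(0, 1) for _ in range(step))


def false_positive_rate(n_studies=2000, seed=0, **kwargs):
    """Fraction of simulated null studies that end up 'significant'."""
    rng = random.Random(seed)
    hits = sum(fished_study(rng, **kwargs) for _ in range(n_studies))
    return hits / n_studies


honest = false_positive_rate(start=100, max_n=100)  # one test at n = 100
fished = false_positive_rate()                      # peek after every 5 observations
print(f"single test: {honest:.3f}, repeated peeking: {fished:.3f}")
```

With a single planned test the false positive rate stays near the nominal 5%, while repeated peeking inflates it well beyond that, even though there is nothing to find.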

Statisticians have pointed to a number of measures that might help. To avoid the trap of thinking about results as significant or not significant, for example, Cumming thinks that researchers should always report effect sizes and confidence intervals. These convey what a P value does not: the magnitude and relative importance of an effect.
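(Not from the article:) a minimal sketch of what reporting an effect size and confidence interval alongside the P-value could look like. The data are simulated, the `summarize` helper is hypothetical, and the interval uses a large-sample normal approximation rather than a t distribution.

```python
import random
from statistics import NormalDist, mean, stdev


def summarize(a, b, level=0.95):
    """Return (mean difference, confidence interval, Cohen's d, two-sided P-value).

    Uses a normal approximation, which is reasonable only for large samples.
    """
    diff = mean(b) - mean(a)
    var_a, var_b = stdev(a) ** 2, stdev(b) ** 2
    se = (var_a / len(a) + var_b / len(b)) ** 0.5
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    ci = (diff - z_crit * se, diff + z_crit * se)
    pooled_sd = (
        ((len(a) - 1) * var_a + (len(b) - 1) * var_b) / (len(a) + len(b) - 2)
    ) ** 0.5
    d = diff / pooled_sd  # standardized effect size
    p = 2 * (1 - NormalDist().cdf(abs(diff / se)))
    return diff, ci, d, p


# Simulated groups: a very small true difference, but a very large sample.
rng = random.Random(1)
group_a = [rng.gauss(0.00, 1.0) for _ in range(20_000)]
group_b = [rng.gauss(0.08, 1.0) for _ in range(20_000)]

diff, ci, d, p = summarize(group_a, group_b)
print(f"difference={diff:.3f}, 95% CI=({ci[0]:.3f}, {ci[1]:.3f}), d={d:.3f}, p={p:.2g}")
```

In this setup the P-value is tiny because the sample is huge, yet Cohen's d stays far below the 0.2 conventionally labeled a "small" effect. The confidence interval makes that magnitude visible in a way the P-value alone does not.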

Read the whole article at: http://www.nature.com/news/scientific-method-statistical-errors-1.14700#/b1